
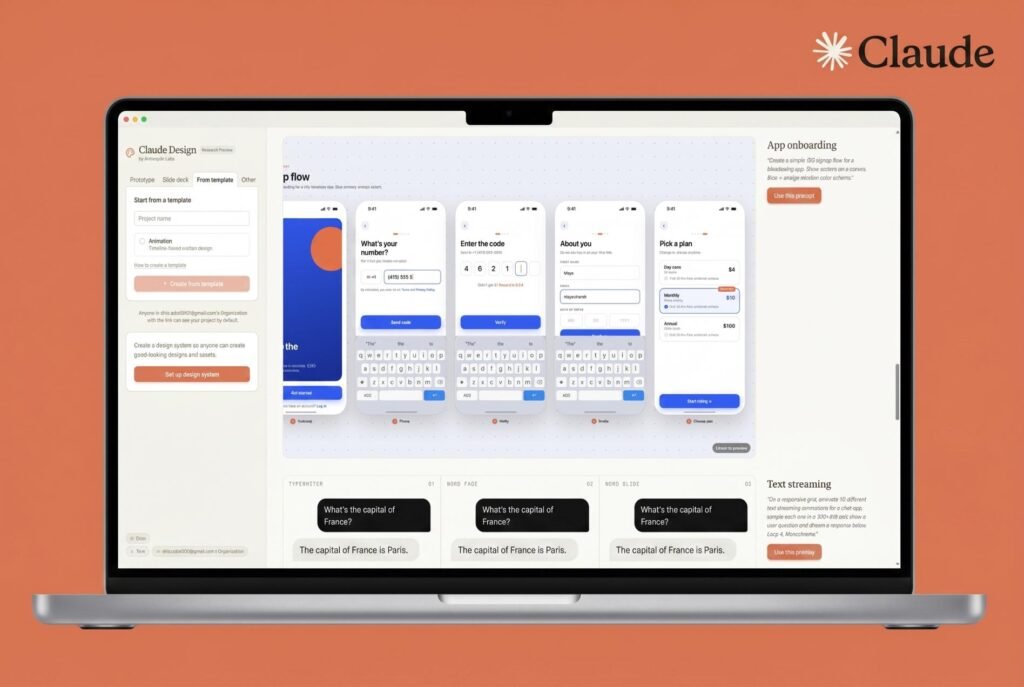

Most people pick an AI assistant based on what it can do. Fewer stop to ask why it behaves the way it does. That gap matters more than you’d think, especially when it comes to Claude’s design.

Claude is not just another large language model with a different name. The decisions Anthropic made when building it, from how it gets trained to how it weighs competing priorities, produce something that feels noticeably different in practice. Sometimes that’s genuinely useful. Sometimes it creates friction. Knowing the design helps you work with it better either way.

Here’s what’s actually going on under the hood.

What is Constitutional AI and why does it matter

The biggest differentiator in Claude’s design is a training method Anthropic developed called Constitutional AI (CAI). Instead of relying entirely on human feedback to teach the model what counts as a good or bad response, Anthropic gave the model a written constitution, a list of principles, and trained it to critique and revise its own outputs against those principles.

Standard RLHF (reinforcement learning from human feedback) requires humans to rate thousands of outputs. CAI keeps the human input where it counts most, in writing the principles, and then uses the model itself to generate large volumes of training data. In the first phase, the model reads its own response, checks it against a principle, and rewrites it if it falls short; the revised outputs become fine-tuning data. In the second phase, the model compares pairs of responses against the principles, and those AI-generated preferences drive the reinforcement learning step. Less reliance on human raters at scale. More internalized behavior.

You see this in how Claude responds when it can’t help. It tends to explain its reasoning rather than just throwing a refusal. It pushes back on a premise rather than silently ignoring it. It sometimes asks what you actually need before deciding what to do. That’s not a behavior layer sitting on top of the model. It’s what the training produced.

📚 In plain English: Most AI models learn what’s acceptable from humans giving thumbs up or thumbs down. Constitutional AI teaches the model to check its own work against a written rulebook. The values get baked in rather than enforced from outside.
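The critique-and-revise loop described above can be sketched in a few lines. This is a toy illustration, not Anthropic’s pipeline: the two principles, the `TrainingPair` type, and the `toy_critique` and `toy_revise` functions are all hypothetical stand-ins for steps that would really be performed by prompting the model itself.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

# Hypothetical two-item "constitution", for illustration only.
PRINCIPLES = (
    "Do not insult the user.",
    "Do not state guesses as facts.",
)


@dataclass
class TrainingPair:
    prompt: str
    response: str


def critique_and_revise(
    prompt: str,
    draft: str,
    critique: Callable[[str, str], Optional[str]],
    revise: Callable[[str, str], str],
    principles: Sequence[str] = PRINCIPLES,
) -> TrainingPair:
    """Check a draft against each principle and revise it when a problem is found."""
    response = draft
    for principle in principles:
        problem = critique(response, principle)  # None means no violation found
        if problem is not None:
            response = revise(response, problem)
    # The (prompt, revised response) pair is what would become training data.
    return TrainingPair(prompt=prompt, response=response)


# Toy stand-ins for model calls (assumptions, not real model behavior).
def toy_critique(response: str, principle: str) -> Optional[str]:
    if "insult" in principle and "stupid" in response:
        return "stupid"
    return None


def toy_revise(response: str, problem: str) -> str:
    return response.replace(problem, "common")


pair = critique_and_revise(
    "Why did my code crash?",
    "That is a stupid mistake: the list index is out of range.",
    toy_critique,
    toy_revise,
)
print(pair.response)
```

The point of the sketch is the shape of the loop: the same system that wrote the draft is also the one that checks and rewrites it, and only the revised output is kept.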

The four-layer priority system

Anthropic documented how Claude is supposed to weigh competing priorities, and it’s worth knowing because it explains behaviors that can otherwise feel inconsistent.

The hierarchy: be broadly safe first, broadly ethical second, follow Anthropic’s guidelines third, and be genuinely helpful fourth. When those goals conflict, helpfulness gives way. Safety and ethics sit above it by design.

That has consequences. A request that’s completely legal and harmless but that Claude’s training flags as potentially risky can result in a refusal even when a more liberal model would just answer. On the flip side, Claude does better at saying “I’m not sure” rather than confidently hallucinating, because its training specifically penalizes confident errors.

Knowing this also tells you something practical about refusals. They’re not random. When Claude declines something, it’s because the request pattern-matches to something above the helpfulness layer in its priority stack. Adding legitimate context to a prompt often resolves a refusal because context shifts how the model interprets the request. That’s not gaming the system. It’s giving the model the information it was missing.

Claude’s priority system: how Claude weighs competing goals, highest to lowest

1. Broadly safe
2. Broadly ethical
3. Follows Anthropic’s guidelines
4. Helpful

The Claude model family and what each tier is for

Claude is not a single model. Anthropic ships three distinct tiers, each built around different cost-to-capability tradeoffs.

Claude Haiku is fast, cheap, and built for volume: classification, simple summarization, customer-facing chatbots where you’re running thousands of queries an hour. The intelligence ceiling is lower, but the speed-to-cost ratio is hard to beat for those use cases.

Claude Sonnet is what most people interact with day to day. It handles complex writing tasks, analysis, and coding without becoming prohibitively expensive at scale. Anthropic updates this tier frequently, which sometimes means behavioral shifts between versions that developers need to track.

Claude Opus is the flagship. Slower, costs more per token, handles complex reasoning and extended context windows better. The most recent release, Claude Opus 4.7, landed with some performance debates in the developer community that are worth reading before you decide whether Opus fits your workflow.

💡 Pro tip: If you’re building a production workflow and cost matters, run your tasks on Sonnet first and only escalate to Opus for the subset that genuinely needs deeper reasoning. Most everyday tasks don’t require the flagship.
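The escalate-only-when-needed pattern might look something like the sketch below. The tier labels are placeholders rather than real API model IDs, and `call_model` and `is_good_enough` are hypothetical hooks you would supply yourself (a real client call and whatever quality check fits your task).

```python
from typing import Callable, Dict

# Placeholder tier labels, not real API model IDs.
DEFAULT_TIER = "sonnet"
ESCALATION_TIER = "opus"


def run_with_escalation(
    task: str,
    call_model: Callable[[str, str], str],
    is_good_enough: Callable[[str], bool],
) -> Dict[str, str]:
    """Try the cheaper tier first; escalate only if the result fails the check."""
    answer = call_model(DEFAULT_TIER, task)
    if is_good_enough(answer):
        return {"tier": DEFAULT_TIER, "answer": answer}
    answer = call_model(ESCALATION_TIER, task)
    return {"tier": ESCALATION_TIER, "answer": answer}


# Toy stand-in for a model call: the cheaper tier "fails" on proof tasks.
def fake_call(tier: str, task: str) -> str:
    if tier == DEFAULT_TIER and "prove" in task:
        return ""
    return f"[{tier}] answer to: {task}"


results = [
    run_with_escalation(task, fake_call, lambda answer: bool(answer))
    for task in ("summarize this memo", "prove this lemma")
]
for result in results:
    print(result["tier"], "->", result["answer"])
```

In practice the quality check is the hard part. A schema validation, a test suite, or a spot-check sample are all reasonable candidates, depending on the workload.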

How Claude handles context and memory

Claude does not have persistent memory between conversations by default. Every session starts clean unless memory features are explicitly enabled through the product or API. That’s an intentional design choice, not a missing feature.

Within a single session, Claude maintains a context window that can be quite large depending on the version. You can paste in long documents, reference earlier parts of a conversation, build on things you established at the start. But once you open a new conversation, that context is gone entirely.

From a privacy and predictability standpoint, this makes sense. A model that doesn’t retain data between sessions can’t accidentally reference something you shared weeks ago or develop behavioral drift from accumulated history. The tradeoff is that you’re responsible for providing context at the start of each session when it matters.

For API users, context management is explicit and deliberate. You pass the full conversation history yourself with each call. No hidden state. That predictability is the point.
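To make “you pass the full conversation history yourself” concrete, here is a minimal sketch. The role/content message shape mirrors Anthropic’s Messages API, but the `Conversation` class and the `send` callable are hypothetical; `fake_send` stands in for a real client call so the example runs without network access.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps the history on the caller's side, since the API holds no state."""

    def __init__(self, send: Callable[[List[Message]], str]) -> None:
        self.send = send
        self.history: List[Message] = []

    def ask(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        # The whole history goes out on every call; nothing is remembered remotely.
        reply = self.send(list(self.history))
        self.history.append({"role": "assistant", "content": reply})
        return reply


# Stand-in for a real API call (an assumption for this sketch).
def fake_send(messages: List[Message]) -> str:
    return f"received {len(messages)} message(s)"


chat = Conversation(fake_send)
first = chat.ask("My project is called Atlas.")
second = chat.ask("What is my project called?")
print(first)
print(second)

# A new conversation starts with an empty history.
fresh = Conversation(fake_send)
print(len(fresh.history))
```

Because the history is just a list you own, you can also trim it, summarize it, or drop turns before sending, which is where the predictability comes from.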

The honesty design: calibrated uncertainty over confident answers

Anthropic specifically trained Claude toward calibrated uncertainty rather than confident-sounding output. Other models often give you the confident-sounding answer even when their underlying confidence should be lower. Claude’s hedged answers are more honest, but they only help if you actually read the qualifiers rather than skimming past them.

The model also has a trained aversion to deception. It won’t claim to be human if asked directly, won’t pretend to have capabilities it doesn’t have, and resists generating content designed to mislead even when a request is framed innocuously. This isn’t a policy sitting in the API layer. It’s behavior that got trained in.

For content work, this plays out as resistance to writing fake reviews, misleading marketing claims, or content impersonating real people. Whether that’s limiting or useful depends entirely on what you’re building.

[Table: Claude design in practice. Four design decisions and how they show up day to day.]

Claude vs other frontier models: where the design diverges

If you’ve used multiple AI assistants, you’ve probably noticed Claude handles certain things differently. The design divergence is intentional.

The most consistent feedback from people who use multiple models is that Claude tends to give longer, more considered answers, pushes back more on requests it finds problematic, and is more willing to say it doesn’t know something. Whether that’s better or worse depends on what you need from it.

For research, analysis, and writing that benefits from nuance, those tendencies are usually an asset. For tasks where you want a fast confident answer and don’t need the model to question the premise, they can feel like friction. If you’re choosing between Claude, GPT, and Gemini for a specific production use case, it’s worth reading a comparison of how they perform across different task categories.

⚠️ Common mistake: Switching models mid-workflow after a single refusal. A lot of refusals resolve with better context in the prompt. Before switching, try adding one sentence explaining your legitimate use case and see if the output changes.

Common misconceptions about Claude’s design

Misconception: Claude is deliberately restricted to be less useful.

The safety layer is not about restricting usefulness for its own sake. Anthropic’s documentation is explicit that unhelpfulness is treated as a cost, not a safe default. Refusals that frustrate people most are usually edge cases where the model pattern-matches a request to something harmful even when the intent is benign. That’s a calibration problem, not a policy to make Claude worse.

Misconception: Constitutional AI means Claude is politically biased.

The constitution Claude trained against focuses on safety and honesty properties, not political positions. Claude declines to generate content that could cause harm, not content it disagrees with politically. On genuinely contested political questions, the model tries to present multiple perspectives rather than taking a side. That’s a design choice about epistemic humility.

Misconception: Claude has a fixed personality that can’t be adjusted.

System prompts can significantly change how Claude behaves. Operators using the API can set personas, restrict topics, adjust tone, and configure Claude for specific use cases. What can’t be changed are the hard limits baked in at the training level. But the personality, communication style, and topical focus are all configurable within those limits.
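As a rough illustration of operator-side configuration, the sketch below assembles a request body with a system prompt. The `system`, `messages`, `model`, and `max_tokens` fields follow the shape of Anthropic’s Messages API; the `build_request` helper, the persona, and the model placeholder are made up for this example.

```python
from typing import Any, Dict, List


def build_request(
    persona: str,
    allowed_topics: List[str],
    tone: str,
    user_text: str,
) -> Dict[str, Any]:
    """Assemble a request body whose system prompt sets persona, topics, and tone."""
    system = (
        f"You are {persona}. "
        f"Only discuss: {', '.join(allowed_topics)}. "
        f"Tone: {tone}."
    )
    return {
        "model": "MODEL_NAME_HERE",  # placeholder; check the docs for current names
        "max_tokens": 512,
        "system": system,
        "messages": [{"role": "user", "content": user_text}],
    }


request = build_request(
    persona="Juno, a support assistant for a bike shop",
    allowed_topics=["repairs", "orders", "opening hours"],
    tone="friendly and brief",
    user_text="Can you fix a bent wheel?",
)
print(request["system"])
```

Everything in the system string is adjustable per deployment. The trained-in limits mentioned above are not something a field in this payload can switch off.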

Misconception: A large context window means perfect recall within a session.

Research from groups including Stanford HAI has shown that LLMs give disproportionate weight to information near the beginning and end of the context window, with content in the middle sometimes receiving less attention. For very long sessions, restating key points near your question often gets better results than assuming the model processed everything equally.
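One simple way to apply that advice is to put the long material first and restate the essentials right before the question. The helper below is a hypothetical sketch of that layout; the section labels and example content are assumptions, not a prescribed format.

```python
from typing import List


def build_long_context_prompt(
    document: str,
    key_points: List[str],
    question: str,
) -> str:
    """Put the long material first, then restate key points right before the question."""
    reminders = "\n".join(f"- {point}" for point in key_points)
    return (
        f"{document}\n\n"
        f"Key points to keep in mind:\n{reminders}\n\n"
        f"Question: {question}"
    )


# Stand-in for a long pasted document.
long_report = "Placeholder paragraph of a long report.\n" * 200

prompt = build_long_context_prompt(
    long_report,
    ["The budget cap is 40k.", "The deadline is in March."],
    "Does the plan in section 7 fit the budget?",
)
print(prompt[-120:])
```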

What this means if you’re using Claude regularly

Treat Claude’s expressed confidence as real information. When it hedges, take the hedge seriously. When it answers confidently, that means something, because this is a model that was specifically trained not to sound confident when it shouldn’t be.

Provide context in your prompts. Not because you’re trying to get around safety features, but because Claude’s training means it genuinely reasons about what kind of request it’s receiving. A sentence of context changes that reasoning. You’ll get better outputs with less friction.

For anyone building on the API, Anthropic’s full model documentation covers current model names, context window sizes, and API parameters. The stateless design means you have complete control over what the model knows in any given call. No hidden state, no behavioral drift from accumulated context you didn’t provide. That’s a genuine advantage if you care about predictable production behavior.