Your model achieved 95 percent accuracy. Success, right? Not so fast. What if 95 percent of your data belongs to one class? What if the model works great on training data but fails on real-world examples? What if accuracy completely misses what actually matters for your application?

Proper model evaluation and testing separates models that actually work in production from those that just look good on paper. Many machine learning projects fail not because the models can’t learn but because evaluation was done wrong. The model optimized the wrong metric or was tested on data too similar to training data.

Model evaluation and testing requires understanding which metrics matter for your problem, how to split data properly, and what to watch for during training. Accuracy is just the beginning. Real evaluation considers precision, recall, confusion matrices, cross-validation, and whether your model generalizes to truly new data.

Why accuracy alone is misleading

Accuracy measures what fraction of predictions are correct. If your model classifies 90 out of 100 examples correctly, accuracy is 90 percent. Simple and intuitive, but often completely misleading.

Consider a fraud detection system where 98 percent of transactions are legitimate. A model that always predicts legitimate achieves 98 percent accuracy without learning anything useful. It catches zero fraud while appearing highly accurate.

This happens with imbalanced classes, where one category vastly outnumbers the others. Medical diagnosis, fraud detection, and spam filtering often have severe class imbalance, and the minority class is usually the one you care most about detecting.

import numpy as np

from sklearn.metrics import accuracy_score, precision_score, recall_score

# Imbalanced dataset: 95% class 0, 5% class 1

y_true = np.array([0]*95 + [1]*5)

# Naive model: always predict class 0

y_pred_naive = np.array([0]*100)

# Smart model: actually detects some of class 1

y_pred_smart = np.array([0]*92 + [1]*3 + [0]*2 + [1]*3)

print(f"Naive model accuracy: {accuracy_score(y_true, y_pred_naive):.2%}")

print(f"Smart model accuracy: {accuracy_score(y_true, y_pred_smart):.2%}")

The naive model gets 95 percent accuracy by predicting the majority class every time. In this example the smart model also scores 95 percent: it catches three of the five minority class examples but raises three false alarms. Accuracy cannot tell the two models apart, even though the smart one is clearly more useful. Accuracy fails to capture what matters.
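To see what accuracy hides here, the same arrays can be scored with precision and recall. This is a minimal sketch that reuses the data from the example above; the `results` dictionary is just an illustrative container, and `zero_division=0` is set so that the naive model, which never predicts the positive class, reports a precision of 0 instead of raising a warning.

```python
import numpy as np
from sklearn.metrics import accuracy_score, precision_score, recall_score

# Same arrays as in the accuracy example
y_true = np.array([0] * 95 + [1] * 5)
y_pred_naive = np.array([0] * 100)
y_pred_smart = np.array([0] * 92 + [1] * 3 + [0] * 2 + [1] * 3)

results = {}
for name, y_pred in [("naive", y_pred_naive), ("smart", y_pred_smart)]:
    results[name] = {
        "accuracy": accuracy_score(y_true, y_pred),
        # zero_division=0: report 0 when no positives are predicted
        "precision": precision_score(y_true, y_pred, zero_division=0),
        "recall": recall_score(y_true, y_pred, zero_division=0),
    }
    print(name, results[name])
```

Both models report the same accuracy, but only the smart model has non-zero precision and recall on the minority class.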

Beyond imbalanced classes, accuracy treats all errors equally. For spam filtering, marking legitimate email as spam is much worse than letting spam through. For medical diagnosis, missing a disease is worse than a false positive. Accuracy ignores these cost differences.
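One way to make unequal error costs concrete is to weight each error type and add them up. The sketch below assumes a false negative costs ten times as much as a false positive; both cost values and the `total_cost` helper are hypothetical and chosen only for illustration, so substitute figures from your own application.

```python
import numpy as np
from sklearn.metrics import confusion_matrix

# Hypothetical costs, for illustration only
COST_FALSE_POSITIVE = 1.0
COST_FALSE_NEGATIVE = 10.0

def total_cost(y_true, y_pred):
    """Sum the cost of all errors, weighting each error type differently."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return fp * COST_FALSE_POSITIVE + fn * COST_FALSE_NEGATIVE

y_true = np.array([0] * 95 + [1] * 5)
y_pred_naive = np.array([0] * 100)
y_pred_smart = np.array([0] * 92 + [1] * 3 + [0] * 2 + [1] * 3)

cost_naive = total_cost(y_true, y_pred_naive)
cost_smart = total_cost(y_true, y_pred_smart)
print(f"Naive model cost: {cost_naive}")
print(f"Smart model cost: {cost_smart}")
```

Under these assumed costs, two models with identical accuracy end up with very different totals.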

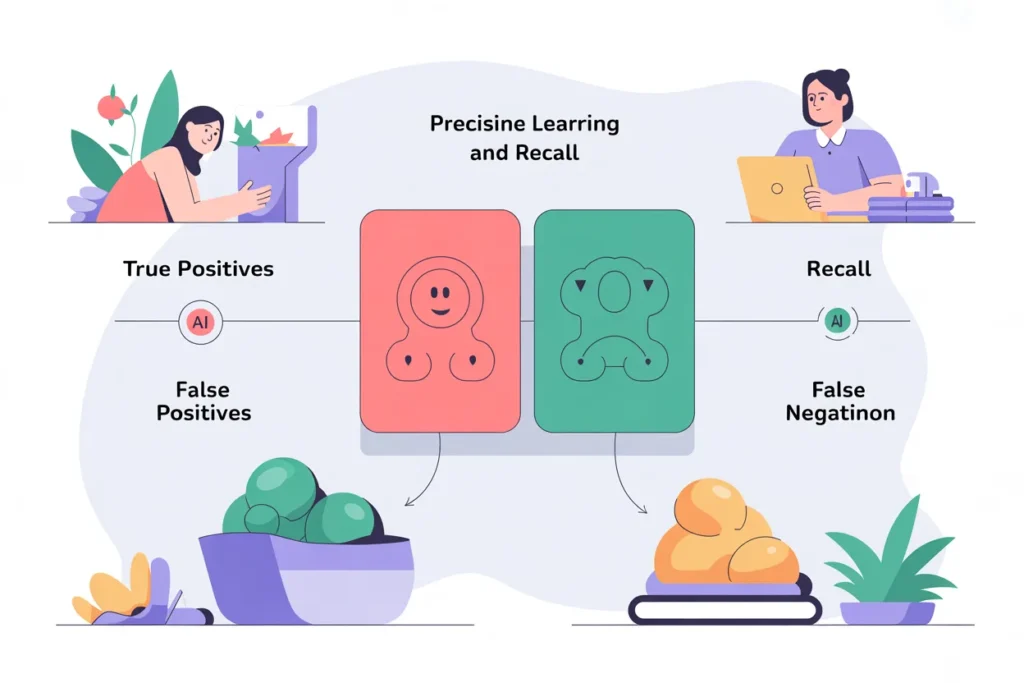

Understanding precision and recall

Precision and recall capture different aspects of classification performance that matter for different applications. Both focus on the positive class, typically the minority class you care about detecting.

Precision measures what fraction of positive predictions are actually positive. If your spam filter flags 100 emails as spam and 80 actually are spam, precision is 80 percent. High precision means few false alarms.

Recall measures what fraction of actual positives you correctly identified. If there are 50 spam emails and you caught 40 of them, recall is 80 percent. High recall means you’re catching most cases.

from sklearn.metrics import classification_report

y_true = np.array([1, 0, 1, 1, 0, 1, 0, 0, 1, 0])

y_pred = np.array([1, 0, 1, 0, 0, 1, 1, 0, 1, 0])

print(classification_report(y_true, y_pred, target_names=['Negative', 'Positive']))

There’s usually a tradeoff between precision and recall. You can achieve perfect recall by predicting positive for everything, but precision plummets to the share of positives in the data. You can push precision up by predicting positive only when the model is very confident, but recall suffers.
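The tradeoff is easiest to see by sweeping a decision threshold over predicted scores. The scores below are made up for illustration and do not come from a trained model; the three thresholds are arbitrary picks that show the two extremes and a middle ground.

```python
import numpy as np
from sklearn.metrics import precision_score, recall_score

# Made-up labels and scores, for illustration only
y_true = np.array([0, 0, 0, 0, 1, 0, 1, 1, 0, 1])
y_score = np.array([0.05, 0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95])

rows = []
for threshold in [0.0, 0.5, 0.9]:
    # Predict positive whenever the score reaches the threshold
    y_pred = (y_score >= threshold).astype(int)
    precision = precision_score(y_true, y_pred, zero_division=0)
    recall = recall_score(y_true, y_pred, zero_division=0)
    rows.append((threshold, precision, recall))
    print(f"threshold={threshold:.1f}  precision={precision:.2f}  recall={recall:.2f}")
```

At a threshold of 0.0 everything is flagged, so recall is perfect and precision is low. At 0.9 only the most confident prediction is flagged, so precision is perfect and recall is low.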

Different applications need different balances. Spam filtering prioritizes precision because false positives are costly. Cancer screening prioritizes recall because missing cases is catastrophic. Choose your focus based on real-world costs.

F1 score combines precision and recall into a single metric. It’s the harmonic mean of the two, giving a balanced measure when you care about both equally. Use F1 when you need one number but precision and recall both matter.
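As a quick check on the harmonic mean, the sketch below computes F1 by hand and compares it with scikit-learn’s `f1_score`. The `f1_from` helper is a hypothetical name used only here. The second call shows why the harmonic mean is used: a model with perfect precision but very low recall gets a low F1, where a plain average would look respectable.

```python
import numpy as np
from sklearn.metrics import f1_score, precision_score, recall_score

def f1_from(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

y_true = np.array([1, 0, 1, 1, 0, 1, 0, 0, 1, 0])
y_pred = np.array([1, 0, 1, 0, 0, 1, 1, 0, 1, 0])

precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)
f1_manual = f1_from(precision, recall)
f1_sklearn = f1_score(y_true, y_pred)
print(f"Manual F1: {f1_manual:.4f}, sklearn F1: {f1_sklearn:.4f}")

# Lopsided case: arithmetic mean would be 0.55, harmonic mean is much lower
f1_lopsided = f1_from(1.0, 0.1)
print(f"F1 for precision=1.0, recall=0.1: {f1_lopsided:.4f}")
```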

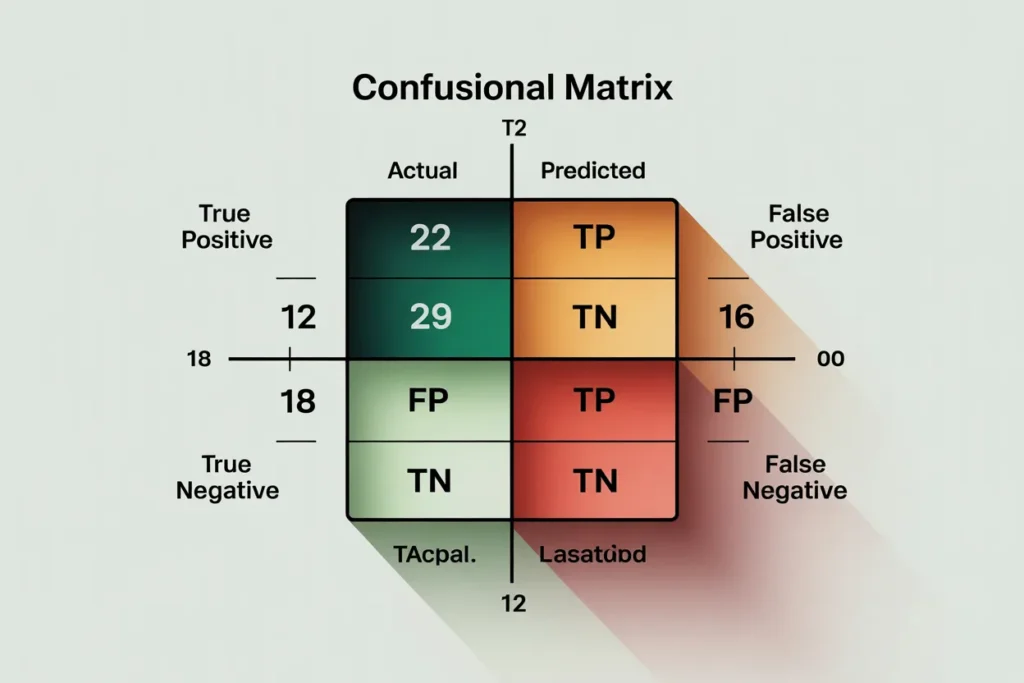

Confusion matrix shows where mistakes happen

The confusion matrix breaks down predictions into four categories: true positives, true negatives, false positives, and false negatives. This shows exactly where your model succeeds and fails.

from sklearn.metrics import confusion_matrix

import seaborn as sns

import matplotlib.pyplot as plt

# Binary classification predictions

y_true = np.array([0, 0, 1, 1, 0, 1, 0, 1, 1, 0])

y_pred = np.array([0, 0, 1, 0, 0, 1, 1, 1, 1, 0])

cm = confusion_matrix(y_true, y_pred)

print("Confusion Matrix:")

print(cm)

print(f"\nTrue Negatives: {cm[0][0]}")

print(f"False Positives: {cm[0][1]}")

print(f"False Negatives: {cm[1][0]}")

print(f"True Positives: {cm[1][1]}")

# Visualize

plt.figure(figsize=(6, 4))

sns.heatmap(cm, annot=True, fmt='d', cmap='Blues')

plt.ylabel('Actual')

plt.xlabel('Predicted')

plt.title('Confusion Matrix')

plt.savefig('confusion_matrix.png')

The confusion matrix immediately shows your model’s behavior. High false positives mean too many false alarms. High false negatives mean you’re missing cases. The distribution of errors guides improvement efforts.

For multi-class problems, the confusion matrix becomes larger but the concept remains the same. Each row represents actual class, each column represents predicted class. Diagonal elements are correct predictions. Off-diagonal elements are specific types of errors.
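Here is a small three-class sketch of that layout. The class names and predictions are invented for illustration. Passing `labels` fixes the row and column order, and dividing the diagonal by the row sums gives per-class recall.

```python
import numpy as np
from sklearn.metrics import confusion_matrix

# Invented labels and predictions, for illustration only
labels = ["cat", "dog", "bird"]
y_true = np.array(["cat", "cat", "cat", "dog", "dog", "dog",
                   "bird", "bird", "bird", "bird"])
y_pred = np.array(["cat", "cat", "dog", "dog", "dog", "cat",
                   "bird", "bird", "dog", "bird"])

# Rows are actual classes, columns are predicted classes, in `labels` order
cm = confusion_matrix(y_true, y_pred, labels=labels)
print(cm)

# Diagonal = correct predictions; row sums = number of actual examples per class
per_class_recall = cm.diagonal() / cm.sum(axis=1)
for name, value in zip(labels, per_class_recall):
    print(f"Recall for {name}: {value:.2f}")
```

Reading across a row shows where a class’s examples ended up. In this made-up data, one cat was mistaken for a dog and one bird was mistaken for a dog.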

Train, validation, and test splits done right

Proper data splitting is fundamental to honest evaluation. Your model must prove it can handle data it has never seen before. Poor splitting leads to overly optimistic performance estimates that don’t hold in production.

The standard approach uses three splits. Training data trains the model. Validation data tunes hyperparameters and monitors training progress. Test data provides final performance evaluation after all decisions are made.

from sklearn.model_selection import train_test_split

# Sample data

X = np.random.randn(1000, 10)

y = np.random.randint(0, 2, 1000)

# First split: separate test set

X_temp, X_test, y_temp, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Second split: training and validation

X_train, X_val, y_train, y_val = train_test_split(
    X_temp, y_temp, test_size=0.25, random_state=42, stratify=y_temp
)

print(f"Training: {len(X_train)} samples")

print(f"Validation: {len(X_val)} samples")

print(f"Test: {len(X_test)} samples")

Never touch test data until the very end. Don’t look at test performance during development. Don’t tune hyperparameters based on test results. Test data must remain completely separate to give unbiased performance estimates.

Stratified splitting maintains class proportions across splits. This is crucial for imbalanced datasets. Without stratification, random splitting might put all minority class examples in one split.
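The effect of stratification can be checked directly by counting minority examples in each split. This sketch uses synthetic data with a 5 percent positive class, which is an assumption made for illustration. The variable names with `_s` and `_r` suffixes mark the stratified and purely random splits.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data: 950 negatives, 50 positives
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 10))
y = np.array([0] * 950 + [1] * 50)

# Stratified split: class proportions are preserved in both parts
_, _, y_train_s, y_test_s = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Plain random split: the minority share can drift from 5%
_, _, y_train_r, y_test_r = train_test_split(
    X, y, test_size=0.2, random_state=42
)

print(f"Stratified test positives: {y_test_s.sum()} of {len(y_test_s)}")
print(f"Random test positives:     {y_test_r.sum()} of {len(y_test_r)}")
```

With only 50 positives, a drift of a few examples in the random split is already a noticeable change in the minority share.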

Common mistakes include training on test data, tuning to test performance, and peeking at test results during development. Any of these invalidates your test results by leaking information from the test set into your decisions.

Cross-validation for robust estimates

Single train-validation splits can be unlucky. Maybe your validation set happens to be easier or harder than typical data. Cross-validation provides more robust performance estimates by testing on multiple different splits.

K-fold cross-validation splits data into k parts. Train on k-1 parts and validate on the remaining part. Repeat k times using each part as validation once. Average the k validation scores for a robust estimate.

from sklearn.model_selection import cross_val_score

from sklearn.linear_model import LogisticRegression

# Sample model

model = LogisticRegression()

# 5-fold cross-validation

cv_scores = cross_val_score(
    model, X_train, y_train,
    cv=5,
    scoring='accuracy'
)

print(f"CV scores: {cv_scores}")

print(f"Mean CV accuracy: {cv_scores.mean():.4f}")

print(f"Std CV accuracy: {cv_scores.std():.4f}")

Cross-validation gives you both a performance estimate and uncertainty measure. High standard deviation means performance varies significantly across folds, suggesting the model is sensitive to training data selection.

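Five or ten folds are common choices. More folds mean each model trains on more of the data, which reduces bias in the estimate, but they take longer to compute and the fold scores can vary more. Stratified k-fold maintains class proportions in each fold; scikit-learn’s cross_val_score already uses it when you pass an integer cv with a classifier.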
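If you want control over shuffling or the random seed, you can pass a `StratifiedKFold` object explicitly. The sketch below assumes a synthetic dataset from `make_classification` with roughly 10 percent positives and scores on recall; both choices are illustrative and not part of the earlier example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic imbalanced data, for illustration only
X, y = make_classification(
    n_samples=500, n_features=10, weights=[0.9, 0.1], random_state=0
)

# Explicit stratified folds with shuffling and a fixed seed
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Share of positives in each validation fold should be nearly identical
fold_positive_rates = [y[val_idx].mean() for _, val_idx in cv.split(X, y)]
print("Positive rate per fold:", np.round(fold_positive_rates, 3))

scores = cross_val_score(
    LogisticRegression(max_iter=1000), X, y, cv=cv, scoring="recall"
)
print(f"Recall per fold: {np.round(scores, 3)}")
print(f"Mean recall: {scores.mean():.3f} (std {scores.std():.3f})")
```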

Use cross-validation during model development and hyperparameter tuning. Reserve your test set for final evaluation after all decisions are made.
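That workflow can be sketched with `GridSearchCV`: hold out a test set first, tune with cross-validation on the rest, and score on the test set exactly once. The synthetic dataset and the grid of `C` values below are assumptions for illustration, not recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic data, for illustration only
X, y = make_classification(n_samples=500, n_features=10, random_state=0)

# Hold out the test set before any tuning happens
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Tune the regularization strength with 5-fold CV on the development data only
search = GridSearchCV(
    LogisticRegression(max_iter=1000),
    param_grid={"C": [0.01, 0.1, 1.0, 10.0]},  # illustrative grid
    cv=5,
    scoring="accuracy",
)
search.fit(X_dev, y_dev)
print(f"Best C: {search.best_params_['C']}")
print(f"Best CV accuracy: {search.best_score_:.4f}")

# Single, final look at the test set after all decisions are made
test_accuracy = search.score(X_test, y_test)
print(f"Test accuracy: {test_accuracy:.4f}")
```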

ROC curves and AUC for threshold analysis

Many classifiers output probabilities rather than hard predictions. You choose a threshold to convert probabilities to predictions. The ROC curve shows performance across all possible thresholds.

ROC plots true positive rate against false positive rate at different thresholds. The area under the ROC curve or AUC summarizes performance in one number. AUC of 0.5 is random guessing. AUC of 1.0 is perfect classification.

from sklearn.metrics import roc_curve, auc, roc_auc_score

# Probability predictions

y_true = np.array([0, 0, 1, 1, 0, 1, 0, 1])

y_proba = np.array([0.1, 0.3, 0.8, 0.6, 0.2, 0.9, 0.4, 0.7])

# Calculate ROC curve

fpr, tpr, thresholds = roc_curve(y_true, y_proba)

roc_auc = auc(fpr, tpr)

print(f"AUC: {roc_auc:.4f}")

# Plot ROC curve

plt.figure(figsize=(8, 6))

plt.plot(fpr, tpr, label=f'ROC curve (AUC = {roc_auc:.2f})')

plt.plot([0, 1], [0, 1], 'k--', label='Random')

plt.xlabel('False Positive Rate')

plt.ylabel('True Positive Rate')

plt.title('ROC Curve')

plt.legend()

plt.savefig('roc_curve.png')

ROC curves help choose operating points. If false positives are costly, pick a high threshold giving low false positive rate. If missing positives is costly, pick a low threshold giving high true positive rate.
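One simple way to pick an operating point is to cap the false positive rate and take the threshold with the highest true positive rate under that cap. The scores and the `MAX_FPR` limit below are hypothetical values chosen for illustration.

```python
import numpy as np
from sklearn.metrics import roc_curve

# Made-up labels and scores, for illustration only
y_true = np.array([0, 0, 0, 0, 1, 0, 1, 1, 0, 1])
y_score = np.array([0.05, 0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95])

# Keep every threshold so none of the candidate operating points are dropped
fpr, tpr, thresholds = roc_curve(y_true, y_score, drop_intermediate=False)

MAX_FPR = 0.2  # hypothetical tolerance for false alarms

# Among thresholds that respect the cap, take the one with the highest TPR
allowed = fpr <= MAX_FPR
best = np.argmax(np.where(allowed, tpr, -1.0))
chosen_threshold = thresholds[best]

print(f"Chosen threshold: {chosen_threshold:.2f}")
print(f"TPR: {tpr[best]:.2f}, FPR: {fpr[best]:.2f}")
```

Pick the threshold on validation data, not on the test set, so the final evaluation stays unbiased.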

AUC is useful for comparing models. Higher AUC means better discrimination ability across all thresholds. Use AUC when your threshold might change or when you want threshold-independent comparison.

Regression evaluation metrics

Classification gets precision, recall, and accuracy. Regression needs different metrics because you’re predicting continuous values, not classes.

Mean absolute error or MAE measures average absolute difference between predictions and actual values. It’s in the same units as your target variable, making it interpretable.

Mean squared error or MSE squares differences before averaging. This penalizes large errors more heavily. Root mean squared error or RMSE is the square root of MSE, returning to original units.

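R-squared measures what fraction of variance in the target your model explains. A score of 1 means perfect predictions, and 0 means the model does no better than always predicting the mean. On held-out data it can even go negative when the model is worse than that baseline. Because it is unitless, R-squared is easier to compare across targets measured on different scales.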
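R-squared is one minus the ratio of the residual sum of squares to the total sum of squares around the mean. The sketch below computes it by hand and checks it against `r2_score`. The `r_squared` helper and the deliberately reversed predictions are illustrative additions; the reversed case shows that the score can fall below zero when predictions are worse than simply guessing the mean.

```python
import numpy as np
from sklearn.metrics import r2_score

def r_squared(y_true, y_pred):
    """1 - (residual sum of squares / total sum of squares)."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1 - ss_res / ss_tot

y_true = np.array([100, 150, 200, 250, 300])
y_pred_good = np.array([110, 140, 190, 260, 295])
y_pred_bad = np.array([300, 250, 200, 150, 100])  # deliberately reversed

r2_good = r_squared(y_true, y_pred_good)
r2_bad = r_squared(y_true, y_pred_bad)

print(f"Good predictions: {r2_good:.4f} (sklearn: {r2_score(y_true, y_pred_good):.4f})")
print(f"Bad predictions:  {r2_bad:.4f} (sklearn: {r2_score(y_true, y_pred_bad):.4f})")
```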

from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score

y_true_reg = np.array([100, 150, 200, 250, 300])

y_pred_reg = np.array([110, 140, 190, 260, 295])

mae = mean_absolute_error(y_true_reg, y_pred_reg)

mse = mean_squared_error(y_true_reg, y_pred_reg)

rmse = np.sqrt(mse)

r2 = r2_score(y_true_reg, y_pred_reg)

print(f"MAE: {mae:.2f}")

print(f"RMSE: {rmse:.2f}")

print(f"R-squared: {r2:.4f}")

Choose metrics that match your problem. If every unit of error costs the same, use MAE. If large errors are particularly bad, use RMSE. If you want a unitless score for comparing models, use R-squared.
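The difference between MAE and RMSE shows up when the same total error is spread evenly or concentrated in one prediction. The two prediction sets below are invented for illustration: both are off by 50 in total, but one puts all of that error into a single example.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error

y_true = np.array([100.0, 100.0, 100.0, 100.0, 100.0])

# Invented predictions: same total absolute error, different distribution
y_pred_even = np.array([110.0, 110.0, 110.0, 110.0, 110.0])   # five errors of 10
y_pred_spike = np.array([100.0, 100.0, 100.0, 100.0, 150.0])  # one error of 50

mae_even = mean_absolute_error(y_true, y_pred_even)
mae_spike = mean_absolute_error(y_true, y_pred_spike)
rmse_even = np.sqrt(mean_squared_error(y_true, y_pred_even))
rmse_spike = np.sqrt(mean_squared_error(y_true, y_pred_spike))

print(f"Even errors:  MAE={mae_even:.2f}  RMSE={rmse_even:.2f}")
print(f"One big miss: MAE={mae_spike:.2f}  RMSE={rmse_spike:.2f}")
```

MAE is identical for both, while RMSE more than doubles for the single large miss.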

Model evaluation and testing ensures your machine learning models actually work in the real world. Proper metrics, honest data splitting, and thorough evaluation catch problems before deployment. These practices separate successful ML projects from expensive failures.

Ready to apply these evaluation techniques to a complete project? Check out our spam classifier tutorial to see how proper evaluation guides model development and ensures your classifier actually catches spam without blocking legitimate emails.